Go: Understanding Concurrency Internals and the Runtime Scheduler

Here's where we started this book:

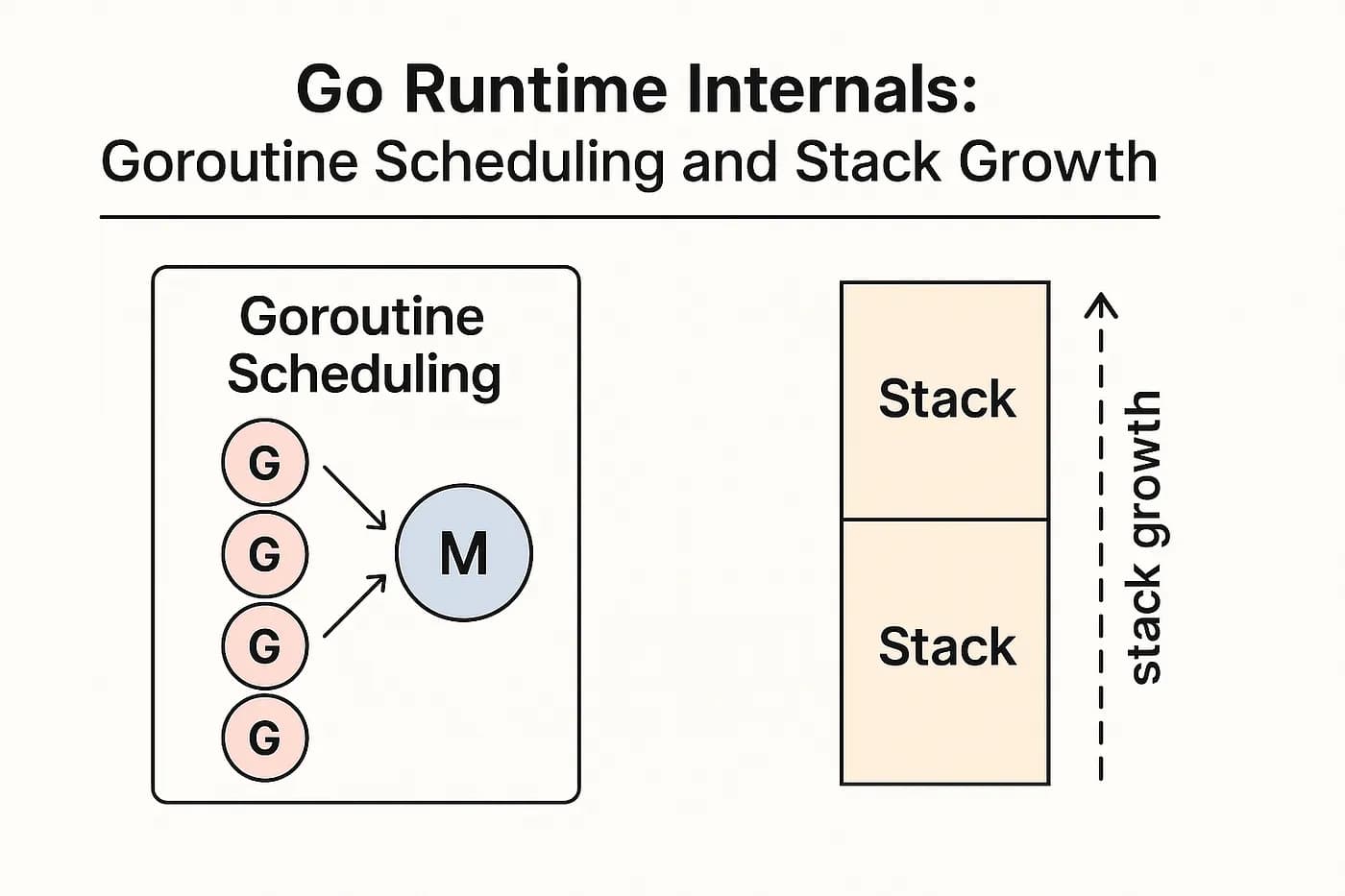

Functions that run with go are called goroutines. The Go runtime juggles these goroutines and distributes them among operating system threads running on CPU cores. Compared to OS threads, goroutines are lightweight, so you can create hundreds or thousands of them.

That's generally correct, but it's a little too brief. In this chapter, we'll take a closer look at how goroutines work. We'll still use a simplified model, but it should help you understand how everything fits together.

Concurrency

At the hardware level, CPU cores are responsible for running parallel tasks. If a processor has 4 cores, it can run 4 instructions at the same time — one on each core.

instr A instr B instr C instr D

┌─────────┐ ┌─────────┐ ┌─────────┐ ┌─────────┐

│ Core 1 │ │ Core 2 │ │ Core 3 │ │ Core 4 │ CPU

└─────────┘ └─────────┘ └─────────┘ └─────────┘

At the operating system level, a thread is the basic unit of execution. There are usually many more threads than CPU cores, so the operating system's scheduler decides which threads to run and which ones to pause. The scheduler keeps switching between threads to make sure each one gets a turn to run on a CPU, instead of waiting in line forever. This is how the operating system handles concurrency.

┌──────────┐ ┌──────────┐

│ Thread E │ │ Thread F │ OS

└──────────┘ └──────────┘

┌──────────┐ ┌──────────┐ ┌──────────┐ ┌──────────┐

│ Thread A │ │ Thread B │ │ Thread C │ │ Thread D │

└──────────┘ └──────────┘ └──────────┘ └──────────┘

│ │ │ │

┌──────────┐ ┌──────────┐ ┌──────────┐ ┌──────────┐

│ Core 1 │ │ Core 2 │ │ Core 3 │ │ Core 4 │ CPU

└──────────┘ └──────────┘ └──────────┘ └──────────┘

At the Go runtime level, a goroutine is the basic unit of execution. The runtime scheduler runs a fixed number of OS threads, often one per CPU core. There can be many more goroutines than threads, so the scheduler decides which goroutines to run on the available threads and which ones to pause. The scheduler keeps switching between goroutines to make sure each one gets a turn to run on a thread, instead of waiting in line forever. This is how Go handles concurrency.

┌─────┐┌─────┐┌─────┐┌─────┐┌─────┐┌─────┐

│ G15 ││ G16 ││ G17 ││ G18 ││ G19 ││ G20 │

└─────┘└─────┘└─────┘└─────┘└─────┘└─────┘

┌─────┐ ┌─────┐ ┌─────┐ ┌─────┐

│ G11 │ │ G12 │ │ G13 │ │ G14 │ Go runtime

└─────┘ └─────┘ └─────┘ └─────┘

│ │ │ │

┌──────────┐ ┌──────────┐ ┌──────────┐ ┌──────────┐

│ Thread A │ │ Thread B │ │ Thread C │ │ Thread D │ OS

└──────────┘ └──────────┘ └──────────┘ └──────────┘

The Go runtime scheduler doesn't decide which threads run on the CPU — that's the operating system scheduler's job. The Go runtime makes sure all goroutines run on the threads it manages, but the OS controls how and when those threads actually get CPU time.
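To make the "many goroutines, few threads" picture concrete, here is a minimal sketch. The helper name runMany is made up for illustration; it only assumes the standard sync and sync/atomic packages (atomic.Int64 needs Go 1.19 or later).

```go
package main

import (
	"fmt"
	"runtime"
	"sync"
	"sync/atomic"
)

// runMany starts n goroutines, waits for all of them,
// and returns how many of them actually ran.
func runMany(n int) int64 {
	var wg sync.WaitGroup
	var ran atomic.Int64
	for i := 0; i < n; i++ {
		wg.Add(1)
		go func() {
			defer wg.Done()
			ran.Add(1)
		}()
	}
	wg.Wait()
	return ran.Load()
}

func main() {
	// The number of goroutines is far larger than the number of CPUs,
	// yet the runtime gets through all of them.
	fmt.Println("logical CPUs:", runtime.NumCPU())
	fmt.Println("goroutines finished:", runMany(10_000))
}
```

The program never creates threads itself. It only asks for goroutines, and the runtime decides which thread each one runs on.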

Goroutine Scheduler

The scheduler's job is to run M goroutines on N operating system threads, where M can be much larger than N. Here's a simple way to do it:

- Put all goroutines in a queue.

- Take N goroutines from the queue and run them.

- If a running goroutine gets blocked (for example, waiting to read from a channel or waiting on a mutex), put it back in the queue and run the next goroutine from the queue.

Take goroutines G11-G14 and run them:

┌─────┐┌─────┐┌─────┐┌─────┐┌─────┐┌─────┐

│ G15 ││ G16 ││ G17 ││ G18 ││ G19 ││ G20 │ queue

└─────┘└─────┘└─────┘└─────┘└─────┘└─────┘

┌─────┐ ┌─────┐ ┌─────┐ ┌─────┐

│ G11 │ │ G12 │ │ G13 │ │ G14 │ running

└─────┘ └─────┘ └─────┘ └─────┘

│ │ │ │

┌──────────┐ ┌──────────┐ ┌──────────┐ ┌──────────┐

│ Thread A │ │ Thread B │ │ Thread C │ │ Thread D │

└──────────┘ └──────────┘ └──────────┘ └──────────┘

Goroutine G12 got blocked while reading from the channel. Put it back in the queue and replace it with G15:

┌─────┐┌─────┐┌─────┐┌─────┐┌─────┐┌─────┐

│ G16 ││ G17 ││ G18 ││ G19 ││ G20 ││ G12 │ queue

└─────┘└─────┘└─────┘└─────┘└─────┘└─────┘

┌─────┐ ┌─────┐ ┌─────┐ ┌─────┐

│ G11 │ │ G15 │ │ G13 │ │ G14 │ running

└─────┘ └─────┘ └─────┘ └─────┘

│ │ │ │

┌──────────┐ ┌──────────┐ ┌──────────┐ ┌──────────┐

│ Thread A │ │ Thread B │ │ Thread C │ │ Thread D │

└──────────┘ └──────────┘ └──────────┘ └──────────┘
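The naive scheme above can be sketched as a toy model. This is not how the Go runtime is implemented; toyScheduler and its methods are hypothetical names used only to replay the two diagrams.

```go
package main

import "fmt"

// toyScheduler models the naive scheme: a FIFO queue of goroutine IDs
// and a fixed number of running slots (one per thread).
type toyScheduler struct {
	queue   []int
	running []int
}

// newToyScheduler takes the first n goroutines from ids and "runs" them;
// the rest wait in the queue.
func newToyScheduler(ids []int, n int) *toyScheduler {
	if n > len(ids) {
		n = len(ids)
	}
	s := &toyScheduler{}
	s.running = append(s.running, ids[:n]...)
	s.queue = append(s.queue, ids[n:]...)
	return s
}

// block moves the goroutine in the given slot to the back of the queue
// and replaces it with the goroutine at the front of the queue.
func (s *toyScheduler) block(slot int) {
	s.queue = append(s.queue, s.running[slot])
	s.running[slot] = s.queue[0]
	s.queue = s.queue[1:]
}

func main() {
	ids := []int{11, 12, 13, 14, 15, 16, 17, 18, 19, 20}
	s := newToyScheduler(ids, 4)
	s.block(1) // G12 (second slot) blocks on a channel read

	fmt.Println("running:", s.running) // running: [11 15 13 14]
	fmt.Println("queue:  ", s.queue)   // queue:   [16 17 18 19 20 12]
}
```

The printed state matches the second diagram: G15 takes over G12's slot, and G12 goes to the back of the queue.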

But there are a few things to keep in mind.

Starvation

Let's say goroutines G11–G14 are running smoothly without getting blocked by mutexes or channels. Does that mean goroutines G15–G20 won't run at all and will just have to wait (starve) until one of G11–G14 finally finishes? That would be unfortunate.

That's why the scheduler checks each running goroutine roughly every 10 ms to decide if it's time to pause it and put it back in the queue. This approach is called preemptive scheduling: the scheduler can interrupt running goroutines when needed so others have a chance to run too.
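Preemption can be observed with a small experiment. The sketch below assumes Go 1.14 or later on a platform that supports asynchronous preemption; spinThenStop is a hypothetical helper name. It limits the program to one running goroutine at a time, starts a goroutine that spins without ever blocking, and checks that the caller still gets to run.

```go
package main

import (
	"fmt"
	"runtime"
	"sync/atomic"
	"time"
)

// spinThenStop runs a goroutine that spins in a tight loop while only one
// goroutine may run at a time, and reports how long it took before the
// caller got a chance to run again and stop the spinner.
func spinThenStop() time.Duration {
	prev := runtime.GOMAXPROCS(1) // one slot for running Go code
	defer runtime.GOMAXPROCS(prev)

	var stop atomic.Bool
	done := make(chan struct{})
	go func() {
		defer close(done)
		for !stop.Load() {
			// Busy loop: never blocks on a channel or mutex.
		}
	}()

	start := time.Now()
	time.Sleep(50 * time.Millisecond) // the spinner takes over the only slot
	stop.Store(true)                  // reached only if the spinner was paused
	<-done
	return time.Since(start)
}

func main() {
	fmt.Println("caller resumed and stopped the spinner after", spinThenStop())
}
```

Without preemption, the spinner would keep the only slot forever and the program would hang. With it, the program finishes shortly after the 50 ms sleep.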

System Calls

The scheduler can manage a goroutine while it's running Go code. But what happens if a goroutine makes a system call, like reading from disk? In that case, the scheduler can't take the goroutine off the thread, and there's no way to know how long the system call will take. For example, if goroutines G11–G14 in our example spend a long time in system calls, all worker threads will be blocked, and the program will basically "freeze".

To solve this problem, the scheduler starts new threads if the existing ones get blocked in a system call. For example, here's what happens if G11 and G12 make system calls:

┌─────┐┌─────┐┌─────┐┌─────┐

│ G17 ││ G18 ││ G19 ││ G20 │ queue

└─────┘└─────┘└─────┘└─────┘

┌─────┐ ┌─────┐ ┌─────┐ ┌─────┐

│ G15 │ │ G16 │ │ G13 │ │ G14 │ running

└─────┘ └─────┘ └─────┘ └─────┘

│ │ │ │

┌──────────┐ ┌──────────┐ ┌──────────┐ ┌──────────┐

│ Thread E │ │ Thread F │ │ Thread C │ │ Thread D │

└──────────┘ └──────────┘ └──────────┘ └──────────┘

│ │

┌──────────┐ ┌──────────┐

│ Thread A │ │ Thread B │ blocked in syscall

└──────────┘ └──────────┘

The scheduler created two new threads (E and F) to keep running goroutines while threads A and B are blocked in system calls. Once the system calls finish, threads A and B can be reused for other goroutines.
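One rough way to watch this happen is the built-in threadcreate profile, which counts the OS threads the runtime has created. The sketch below is an experiment, not a guarantee: the helper names are hypothetical, and whether the count grows depends on the platform and on how long the file reads actually block.

```go
package main

import (
	"fmt"
	"log"
	"os"
	"runtime/pprof"
	"sync"
)

// threadsCreated reports how many OS threads the runtime has created so far.
func threadsCreated() int {
	return pprof.Lookup("threadcreate").Count()
}

// readConcurrently reads the file several times from each of n goroutines.
// Reads from regular files typically go through blocking system calls.
func readConcurrently(path string, n, reads int) {
	var wg sync.WaitGroup
	for i := 0; i < n; i++ {
		wg.Add(1)
		go func() {
			defer wg.Done()
			for j := 0; j < reads; j++ {
				_, _ = os.ReadFile(path)
			}
		}()
	}
	wg.Wait()
}

// measure writes a temporary file, reads it from many goroutines,
// and returns the thread counts before and after.
func measure() (before, after int, err error) {
	f, err := os.CreateTemp("", "syscall-demo-*")
	if err != nil {
		return 0, 0, err
	}
	defer os.Remove(f.Name())
	if _, err := f.Write(make([]byte, 256<<10)); err != nil {
		f.Close()
		return 0, 0, err
	}
	if err := f.Close(); err != nil {
		return 0, 0, err
	}

	before = threadsCreated()
	readConcurrently(f.Name(), 32, 20)
	return before, threadsCreated(), nil
}

func main() {
	before, after, err := measure()
	if err != nil {
		log.Fatal(err)
	}
	fmt.Printf("threads created: %d before, %d after\n", before, after)
}
```

If the second number is larger than the first, the runtime started extra threads while some of the existing ones were busy in system calls.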

GOMAXPROCS

The GOMAXPROCS environment variable (or the runtime.GOMAXPROCS function) controls how many OS threads can execute Go code at the same time. Threads that are blocked in system calls don't count toward this limit. By default, it's set to the number of logical CPUs available to the program.

For example, on a 4-core machine, GOMAXPROCS is 4 by default. This means the scheduler can run goroutines on up to 4 OS threads at the same time. If you set GOMAXPROCS to 2, only 2 threads will run Go code at any given moment, even if you have 4 cores.

You usually don't need to change GOMAXPROCS. The default value works well for most programs. Raising it above the number of CPUs rarely helps, even if your program spends a lot of time waiting on network I/O: goroutines that wait on the network are parked without occupying a thread, and threads blocked in system calls don't count toward the limit anyway.
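Here is a small sketch of reading and changing the setting from code. runtime.GOMAXPROCS is the real API; withMaxProcs and currentMaxProcs are hypothetical helpers added for illustration.

```go
package main

import (
	"fmt"
	"runtime"
)

// currentMaxProcs returns the current setting without changing it:
// an argument below 1 only queries the value.
func currentMaxProcs() int {
	return runtime.GOMAXPROCS(0)
}

// withMaxProcs runs fn with GOMAXPROCS temporarily set to n
// and restores the previous value afterwards.
func withMaxProcs(n int, fn func()) {
	prev := runtime.GOMAXPROCS(n) // returns the previous setting
	defer runtime.GOMAXPROCS(prev)
	fn()
}

func main() {
	fmt.Println("default: ", currentMaxProcs())
	withMaxProcs(2, func() {
		fmt.Println("inside:  ", currentMaxProcs())
	})
	fmt.Println("restored:", currentMaxProcs())
}
```

The same limit can be set without touching the code by running the program with the GOMAXPROCS environment variable, for example GOMAXPROCS=2.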

Concurrency Primitives

The Go scheduler interacts with various concurrency primitives:

Channels: When a goroutine blocks on a channel operation, the scheduler moves it out of the running state and puts it in a waiting state. When the channel operation can proceed, the scheduler moves the goroutine back to the runnable state.

Mutexes: When a goroutine tries to lock a mutex that's already locked, the scheduler blocks the goroutine until the mutex becomes available.

WaitGroups: When a goroutine calls Wait() on a wait group, the scheduler blocks it until the wait group counter reaches zero.

Timers and Tickers: The scheduler manages timers and tickers, waking up goroutines when their time expires.

System Calls: When a goroutine makes a blocking system call, the scheduler may create a new OS thread to keep other goroutines running.
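The following sketch puts three of these primitives in one place, with comments marking where a goroutine may be taken off its thread. The function name sumWithWorkers is made up for this example.

```go
package main

import (
	"fmt"
	"sync"
)

// sumWithWorkers adds up nums using several worker goroutines.
func sumWithWorkers(nums []int, workers int) int {
	jobs := make(chan int) // unbuffered: sender and receiver wait for each other
	var (
		mu    sync.Mutex
		total int
		wg    sync.WaitGroup
	)

	for w := 0; w < workers; w++ {
		wg.Add(1)
		go func() {
			defer wg.Done()
			for n := range jobs { // worker waits here while there is nothing to receive
				mu.Lock() // worker waits here if another worker holds the lock
				total += n
				mu.Unlock()
			}
		}()
	}

	for _, n := range nums {
		jobs <- n // caller waits here until some worker is ready to receive
	}
	close(jobs)
	wg.Wait() // caller waits here until every worker has called Done
	return total
}

func main() {
	nums := []int{1, 2, 3, 4, 5, 6, 7, 8, 9, 10}
	fmt.Println(sumWithWorkers(nums, 3)) // 55
}
```

Every commented line is a point where the scheduler can park the goroutine and give its thread to another one.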

Scheduler Metrics

The Go runtime provides several metrics that can help you understand how the scheduler is performing:

- runtime.NumGoroutine(): Returns the number of goroutines that currently exist.

- runtime.NumCPU(): Returns the number of logical CPUs available.

- runtime.GOMAXPROCS(0): Returns the current value of GOMAXPROCS without changing it.

You can also use the runtime/metrics package to get more detailed metrics about the scheduler and goroutines.
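As a minimal sketch of the runtime/metrics API, the code below reads a single metric, the number of live goroutines. It assumes the metric name /sched/goroutines:goroutines is supported by your Go version; the helper goroutineCount is hypothetical and reports false if it is not.

```go
package main

import (
	"fmt"
	"runtime/metrics"
)

// goroutineCount reads the number of live goroutines via runtime/metrics.
// The second result is false if this Go version doesn't support the metric.
func goroutineCount() (uint64, bool) {
	samples := []metrics.Sample{{Name: "/sched/goroutines:goroutines"}}
	metrics.Read(samples)
	if samples[0].Value.Kind() != metrics.KindUint64 {
		return 0, false
	}
	return samples[0].Value.Uint64(), true
}

func main() {
	n, ok := goroutineCount()
	if !ok {
		fmt.Println("metric not supported")
		return
	}
	fmt.Println("goroutines:", n)
}
```

metrics.All() returns the full list of metric names and descriptions supported by the running Go version.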

Profiling

Profiling helps you understand where your program spends time and memory. Go provides built-in profiling support through the runtime/pprof package.

CPU Profile

A CPU profile shows which functions consume the most CPU time. To collect a CPU profile, you can use the go test command with the -cpuprofile flag:

go test -cpuprofile=cpu.prof ./...

Or you can enable the profiling HTTP server in your program:

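import (

	"log"

	"net/http"

	// Registers the /debug/pprof/ handlers on the default mux.

	_ "net/http/pprof"

)

func main() {

	// Serve profiling data in the background.

	go func() {

		log.Println(http.ListenAndServe("localhost:6060", nil))

	}()

	// ... rest of your program

}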

Then you can collect profiles by visiting URLs like:

- http://localhost:6060/debug/pprof/profile?seconds=30 for CPU profile

- http://localhost:6060/debug/pprof/heap for heap profile

- http://localhost:6060/debug/pprof/goroutine for goroutine profile
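A CPU profile can also be written directly from code with the runtime/pprof package. The sketch below is one possible way to do it; busyWork and profileToTemp are hypothetical names, and the workload is a placeholder for your own code.

```go
package main

import (
	"fmt"
	"log"
	"os"
	"runtime/pprof"
)

var sink int // keeps the result of busyWork alive

// busyWork burns some CPU so the profiler has something to sample.
func busyWork(n int) int {
	sum := 0
	for i := 0; i < n; i++ {
		sum += i % 7
	}
	return sum
}

// profileToTemp records a CPU profile of busyWork into a temporary file
// and returns the file path and the profile size in bytes.
func profileToTemp() (string, int64, error) {
	f, err := os.CreateTemp("", "cpu-*.prof")
	if err != nil {
		return "", 0, err
	}
	defer f.Close()

	if err := pprof.StartCPUProfile(f); err != nil {
		return "", 0, err
	}
	sink = busyWork(100_000_000)
	pprof.StopCPUProfile() // flushes the profile to the file

	info, err := f.Stat()
	if err != nil {
		return "", 0, err
	}
	return f.Name(), info.Size(), nil
}

func main() {
	path, size, err := profileToTemp()
	if err != nil {
		log.Fatal(err)
	}
	fmt.Printf("wrote %d bytes to %s\n", size, path)
}
```

The resulting file can be opened with go tool pprof just like one produced by go test.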

Heap Profile

A heap profile shows memory allocations. You can collect it similarly:

go test -memprofile=heap.prof ./...
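Outside of tests, a heap profile can be written with pprof.WriteHeapProfile. The following sketch writes it into a memory buffer to stay self-contained; heapProfileSize and the retained variable are hypothetical, and a real program would more likely write to a file.

```go
package main

import (
	"bytes"
	"fmt"
	"log"
	"runtime"
	"runtime/pprof"
)

// retained keeps the allocations alive so they count as in-use memory.
var retained [][]byte

// heapProfileSize allocates some memory, writes a heap profile
// into a buffer, and returns the profile size in bytes.
func heapProfileSize() (int, error) {
	for i := 0; i < 16; i++ {
		retained = append(retained, make([]byte, 1<<20))
	}

	// The heap profile reflects the state as of the most recently
	// completed garbage collection, so run one first.
	runtime.GC()

	var buf bytes.Buffer
	if err := pprof.WriteHeapProfile(&buf); err != nil {
		return 0, err
	}
	return buf.Len(), nil
}

func main() {
	n, err := heapProfileSize()
	if err != nil {
		log.Fatal(err)
	}
	fmt.Printf("heap profile: %d bytes\n", n)
}
```

Replacing the buffer with a file from os.Create gives a profile that go tool pprof can open.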

Block Profile

A block profile shows where goroutines are blocked (waiting on channels, mutexes, etc.). To enable it:

runtime.SetBlockProfileRate(1)

Then collect the profile:

go tool pprof http://localhost:6060/debug/pprof/block

Mutex Profile

A mutex profile shows contention on mutexes. To enable it:

runtime.SetMutexProfileFraction(1)

Then collect the profile:

go tool pprof http://localhost:6060/debug/pprof/mutex
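Both profiles can also be collected without the HTTP server. The sketch below enables them, creates some lock contention on purpose, and writes each profile to a buffer through pprof.Lookup. The helper names are made up for this example. A rate of 1 records every event, which is convenient for a demo but may add noticeable overhead in a real service.

```go
package main

import (
	"bytes"
	"fmt"
	"log"
	"runtime"
	"runtime/pprof"
	"sync"
	"time"
)

// enableContentionProfiles turns on block and mutex profiling.
func enableContentionProfiles() {
	runtime.SetBlockProfileRate(1)     // record every blocking event
	runtime.SetMutexProfileFraction(1) // record every contention event
}

// contend makes several goroutines fight over one mutex.
func contend(workers int) {
	var mu sync.Mutex
	var wg sync.WaitGroup
	for i := 0; i < workers; i++ {
		wg.Add(1)
		go func() {
			defer wg.Done()
			mu.Lock()
			time.Sleep(5 * time.Millisecond) // hold the lock so others queue up
			mu.Unlock()
		}()
	}
	wg.Wait()
}

// profileSize writes the named profile into a buffer and returns its size.
func profileSize(name string) (int, error) {
	p := pprof.Lookup(name)
	if p == nil {
		return 0, fmt.Errorf("unknown profile %q", name)
	}
	var buf bytes.Buffer
	if err := p.WriteTo(&buf, 0); err != nil {
		return 0, err
	}
	return buf.Len(), nil
}

func main() {
	enableContentionProfiles()
	contend(8)
	for _, name := range []string{"block", "mutex"} {
		n, err := profileSize(name)
		if err != nil {
			log.Fatal(err)
		}
		fmt.Printf("%s profile: %d bytes\n", name, n)
	}
}
```

Writing the buffer to a file instead lets you open the result with go tool pprof.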

Viewing Profiles

You can view profiles using the go tool pprof command:

go tool pprof -http=localhost:8080 cpu.prof

This opens a web interface where you can view:

- Flame graphs: Show the call hierarchy and resource usage

- Source view: Shows the exact lines of code

- Top functions: Lists functions by resource usage

Tracing

Tracing records certain types of events while the program is running, mainly those related to concurrency and memory:

- goroutine creation and state changes;

- system calls;

- garbage collection;

- heap size changes;

- and more.

If you enabled the profiling server as described earlier, you can collect a trace using this URL:

http://localhost:6060/debug/pprof/trace?seconds=N

Trace files can be quite large, so it's better to use a small N value.

After tracing is complete, you'll get a binary file that you can open in the browser using the go tool trace utility:

go tool trace -http=localhost:6060 trace.out

In the trace web interface, you'll see each goroutine's "lifecycle" on its own line. You can zoom in and out of the trace with the W and S keys, and you can click on any event to see more details.

You can also collect a trace manually:

import (

	"log"

	"os"

	"runtime/trace"

)

func main() {

	// Start tracing and stop it when main exits.

	file, err := os.Create("trace.out")

	if err != nil {

		log.Fatal(err)

	}

	defer file.Close()

	if err := trace.Start(file); err != nil {

		log.Fatal(err)

	}

	defer trace.Stop()

	// The rest of the program code.

	// ...

}

Flight Recorder

Flight recording is a tracing technique that collects execution data, such as function calls and memory allocations, within a sliding window that's limited by size or duration. It helps to record traces of interesting program behavior, even if you don't know in advance when it will happen.

The trace.FlightRecorder type (Go 1.25+) implements a flight recorder in Go. It tracks a moving window over the execution trace produced by the runtime, always containing the most recent trace data.

Here's an example of how you might use it.

First, configure the sliding window:

// Configure the flight recorder to keep

// at least 5 seconds of trace data,

// with a maximum buffer size of 3MB.

// Both of these are hints, not strict limits.

cfg := trace.FlightRecorderConfig{

MinAge: 5 * time.Second,

MaxBytes: 3 << 20, // 3MB

}

Then create the recorder and start it:

// Create and start the flight recorder.

rec := trace.NewFlightRecorder(cfg)

rec.Start()

defer rec.Stop()

Continue with the application code as usual:

// Simulate some workload.

done := make(chan struct{})

go func() {

defer close(done)

const n = 1 << 20

var s []int

for range n {

s = append(s, rand.IntN(n))

}

fmt.Printf("done filling slice of %d elements\n", len(s))

}()

<-done

Finally, save the trace snapshot to a file when an important event occurs:

// Save the trace snapshot to a file.

file, _ := os.Create("/tmp/trace.out")

defer file.Close()

n, _ := rec.WriteTo(file)

fmt.Printf("wrote %dB to trace file\n", n)

done filling slice of 1048576 elements

wrote 8441B to trace file

Use go tool trace to view the trace in the browser:

go tool trace -http=localhost:6060 /tmp/trace.out

Summary

Now you can see how challenging the Go scheduler's job is. Fortunately, most of the time you don't need to worry about how it works behind the scenes — sticking to goroutines, channels, select, and other synchronization primitives is usually enough.

Key points to remember:

- CPU cores execute instructions in parallel at the hardware level.

- OS threads are managed by the operating system scheduler.

- Goroutines are managed by the Go runtime scheduler.

- The Go scheduler uses preemptive scheduling to prevent starvation.

- System calls may require creating additional OS threads.

- GOMAXPROCS controls the number of OS threads that can run Go code at the same time.

- Profiling and tracing help you understand scheduler behavior and performance.

The Go scheduler is a sophisticated piece of software that handles the complex task of managing thousands of goroutines efficiently. Understanding its internals can help you write better concurrent programs, but in most cases, you can rely on it to do its job without intervention.