Grafana K6 Reference Guide: Complete Guide for Performance Testing Engineers

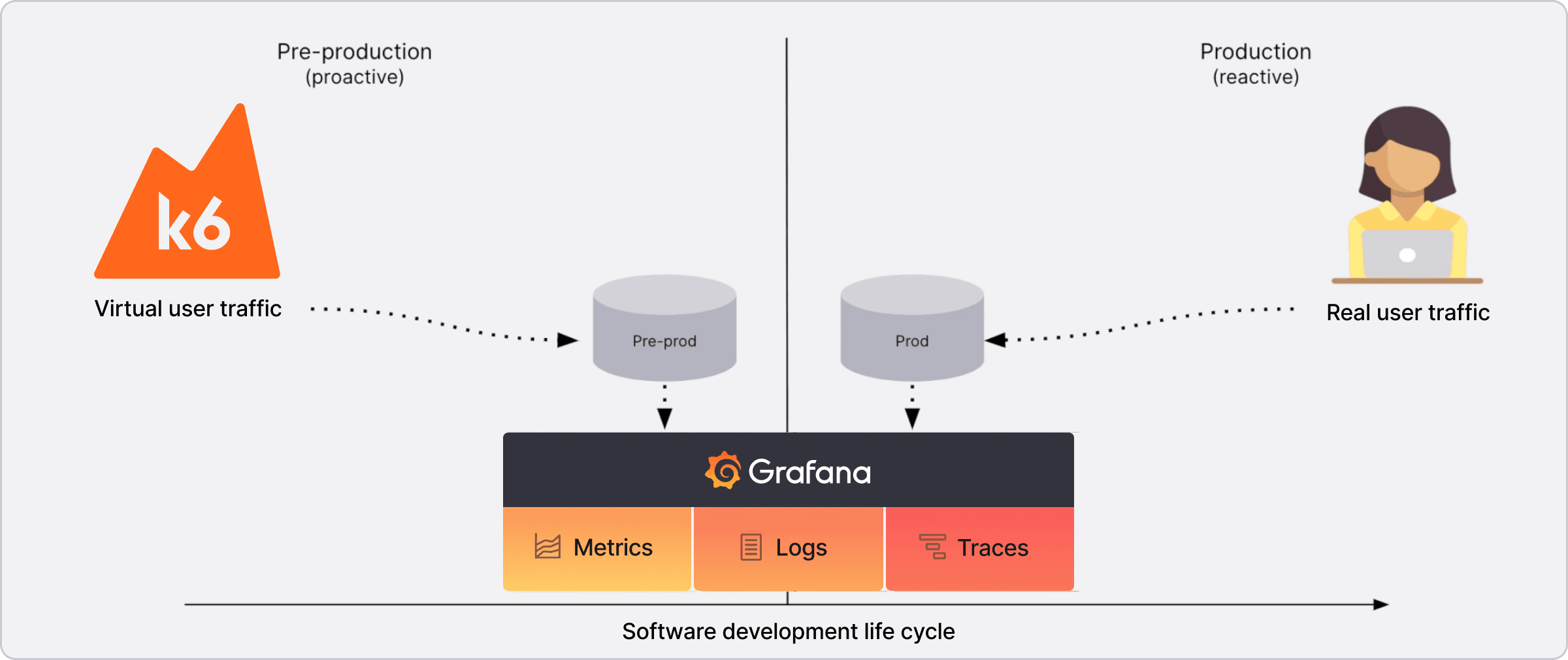

Introduction to Grafana K6

Grafana K6 is an open-source tool designed for performance testing. It's great for testing APIs, microservices, and websites at scale, providing developers and testers with insights into system performance. This cheat sheet covers the key aspects every performance engineer should know to get started with Grafana K6.

What is Grafana K6?

Grafana K6 is a modern load testing tool for developers and testers that makes performance testing simple, scalable, and easy to integrate into your CI pipeline.

When to use it?

- Load testing

- Stress testing

- Spike testing

- Performance bottleneck detection

- API testing

- Browser testing

- Chaos engineering

Grafana K6 Cheat Sheet: Essential Aspects

Installation

Install Grafana K6 via Homebrew or Docker:

brew install k6

# Or with Docker

docker run -i grafana/k6 run - <script.js

Basic Test with a Public REST API

Here's how to run a simple test using a public REST API:

import http from "k6/http";
import { check, sleep } from "k6";

export default function () {
  // Call the API endpoint
  const res = http.get("https://jsonplaceholder.typicode.com/posts/1");

  // Define the expected response
  const expectedResponse = {
    userId: 1,
    id: 1,
    title:
      "sunt aut facere repellat provident occaecati excepturi optio reprehenderit",
    body: "quia et suscipit\nsuscipit recusandae consequuntur expedita et cum\nreprehenderit molestiae ut ut quas totam\nnostrum rerum est autem sunt rem eveniet architecto",
  };

  // Assert the response is as expected
  check(res, {
    "status is 200": (r) => r.status === 200,
    "response is correct": (r) =>
      JSON.stringify(JSON.parse(r.body)) === JSON.stringify(expectedResponse),
  });

  // Pause for 1 second between iterations
  sleep(1);
}
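The "response is correct" check compares JSON.stringify output, so it only passes if the server returns the keys in the same order as expectedResponse. A minimal sketch of an order-insensitive comparison, using a hypothetical deepEqual helper written in plain JavaScript (no k6 APIs), so it can be pasted into a test script or exported from a utils module:

```javascript
// Hypothetical helper: order-insensitive structural equality for JSON values
// (objects, arrays, strings, numbers, booleans, null).
function deepEqual(a, b) {
  // Identical primitives (or the same reference) are equal.
  if (a === b) return true;
  // Past this point, both values must be non-null objects to be equal.
  if (typeof a !== "object" || typeof b !== "object" || a === null || b === null) {
    return false;
  }
  // An array never equals a plain object.
  if (Array.isArray(a) !== Array.isArray(b)) return false;
  // Arrays are compared element by element, in order.
  if (Array.isArray(a)) {
    return a.length === b.length && a.every((item, i) => deepEqual(item, b[i]));
  }
  // Objects must have the same set of keys, regardless of key order.
  const keysA = Object.keys(a);
  const keysB = Object.keys(b);
  if (keysA.length !== keysB.length) return false;
  return keysA.every(
    (key) => Object.prototype.hasOwnProperty.call(b, key) && deepEqual(a[key], b[key])
  );
}
```

The check could then read "response is correct": (r) => deepEqual(r.json(), expectedResponse), where r.json() parses the response body. Array order is still significant, which matches JSON semantics.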

Running the Test and Using the Web Dashboard

To run the test with the web dashboard enabled and export an HTML report, use the following command:

K6_WEB_DASHBOARD=true K6_WEB_DASHBOARD_EXPORT=html-report.html k6 run ./src/rest/jsonplaceholder-api-rest.js

When the test finishes, this writes an HTML report named html-report.html. The path is relative to the directory you run k6 from, so to keep reports in the reports folder, set K6_WEB_DASHBOARD_EXPORT=reports/html-report.html instead.

While the test is running, the web dashboard also shows the results in real time at the following URL (5665 is the dashboard's default port):

http://127.0.0.1:5665/

Test with a Public GraphQL API

The next example uses a public GraphQL API.

If you are not familiar with GraphQL, see What is GraphQL?

For details about the API used here, see the GraphQL Pokémon documentation.

For more on testing GraphQL APIs, see GraphQL Testing.

This simple test fetches a Pokémon by name and checks that the response is successful and matches the expected data:

import http from "k6/http";
import { check, sleep } from "k6";

// Define the query and variables
const query = `
  query getPokemon($name: String!) {
    pokemon(name: $name) {
      id
      name
      types
    }
  }`;

const variables = {
  name: "pikachu",
};

// Define the test function
export default function () {
  const url = "https://graphql-pokemon2.vercel.app/";
  const payload = JSON.stringify({
    query: query,
    variables: variables,
  });

  // Define the headers
  const headers = {
    "Content-Type": "application/json",
  };

  // Make the request
  const res = http.post(url, payload, { headers: headers });

  // Define the expected response
  const expectedResponse = {
    data: {
      pokemon: {
        id: "UG9rZW1vbjowMjU=",
        name: "Pikachu",
        types: ["Electric"],
      },
    },
  };

  // Assert the response is as expected
  check(res, {
    "status is 200": (r) => r.status === 200,
    "response is correct": (r) =>
      JSON.stringify(JSON.parse(r.body)) === JSON.stringify(expectedResponse),
  });

  // Pause between iterations so the public API is not flooded with requests
  sleep(1);
}
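Checking only the status code can miss failures: many GraphQL servers answer with HTTP 200 even when a query fails, and report the problem in a top-level errors list, as the GraphQL specification describes. A minimal sketch of a hypothetical helper, graphqlErrors, that extracts those messages from the raw response body (plain JavaScript, no k6 APIs):

```javascript
// Hypothetical helper: returns the GraphQL error messages found in a response body.
// An empty array means the body is a JSON object without a non-empty "errors" list.
function graphqlErrors(body) {
  let parsed;
  try {
    parsed = JSON.parse(body);
  } catch (e) {
    return ["response body is not valid JSON"];
  }
  if (parsed === null || typeof parsed !== "object" || Array.isArray(parsed)) {
    return ["response body is not a JSON object"];
  }
  // GraphQL reports failures in a top-level "errors" list, often alongside HTTP 200.
  if (Array.isArray(parsed.errors) && parsed.errors.length > 0) {
    return parsed.errors.map((err) =>
      err && typeof err.message === "string" ? err.message : "unknown GraphQL error"
    );
  }
  return [];
}
```

It could be added to the check above as "no GraphQL errors": (r) => graphqlErrors(r.body).length === 0, so a failed query is counted as a failed check even when the status is 200.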

Best Practices for Structuring Performance Projects

Centralized Configuration

Define global configuration options such as performance thresholds, the number of virtual users (VUs), and durations in one place, so they are easy to change and maintain.

Example configuration file:

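// ./src/config/options.js
export const options = {
  // Each stage ramps the number of VUs linearly to its target over its duration
  stages: [
    { duration: '30s', target: 20 },
    { duration: '1m', target: 50 },
    { duration: '30s', target: 0 },
  ],
  // The test is marked as failed if any of these limits is crossed
  thresholds: {
    http_req_duration: ['p(95)<500'], // 95% of requests must complete in under 500 ms
    http_req_failed: ['rate<0.01'],   // fewer than 1% of requests may fail
  },
};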
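To change the load level without editing the stage list by hand, one option is to derive the stages from a single peak value. A minimal sketch, assuming a hypothetical buildStages helper that keeps the same shape as the stages above (warm up to 40% of the peak, ramp to the peak, ramp down to zero):

```javascript
// Hypothetical helper: builds ramping stages from one peak VU count.
// buildStages(50) reproduces the stages in options.js above.
function buildStages(peakVUs, { warmUp = "30s", rampToPeak = "1m", rampDown = "30s" } = {}) {
  if (!Number.isInteger(peakVUs) || peakVUs <= 0) {
    throw new Error(`peakVUs must be a positive integer, got ${peakVUs}`);
  }
  return [
    // Ramp from 0 VUs to 40% of the peak (rounded up so it is never 0)
    { duration: warmUp, target: Math.ceil((peakVUs * 2) / 5) },
    // Ramp linearly from there up to the peak
    { duration: rampToPeak, target: peakVUs },
    // Ramp back down to 0 VUs
    { duration: rampDown, target: 0 },
  ];
}
```

In options.js this could become stages: buildStages(Number(__ENV.PEAK_VUS || 50)), with the peak passed on the command line, for example k6 run -e PEAK_VUS=100 script.js. __ENV and the -e flag are k6 features; the PEAK_VUS name is only an example.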

Modular Code Organization

Break down test scripts into reusable functions and modules. This makes the code more maintainable and easier to understand.

Example of modular requests:

// ./src/utils/requests-jsonplaceholder.js
import http from "k6/http";

export function getPost(id) {
  return http.get(`https://jsonplaceholder.typicode.com/posts/${id}`);
}

export function createPost(post) {
  return http.post(
    "https://jsonplaceholder.typicode.com/posts",
    JSON.stringify(post),
    { headers: { "Content-Type": "application/json" } }
  );
}
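Both functions repeat the base URL, and createPost builds its JSON headers inline. The project structure later in this guide lists a constants.js file for this kind of value; a minimal sketch of what it might contain (BASE_URL, JSON_HEADERS, and endpoint are hypothetical names; in a k6 module you would add export in front of each declaration):

```javascript
// Hypothetical contents for ./src/utils/constants.js
const BASE_URL = "https://jsonplaceholder.typicode.com";
const JSON_HEADERS = { "Content-Type": "application/json" };

// Hypothetical helper: joins path segments onto BASE_URL, URL-encoding each segment.
function endpoint(...segments) {
  return [BASE_URL, ...segments.map((segment) => encodeURIComponent(String(segment)))].join("/");
}
```

With these in place, getPost could call http.get(endpoint("posts", id)) and createPost could pass { headers: JSON_HEADERS }, so switching environments means changing one line.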

Then use these functions in your test script:

// ./src/rest/jsonplaceholder-api-rest.js
import { check, sleep } from "k6";
import { getPost } from "../utils/requests-jsonplaceholder.js";
import { options } from "../config/options.js";

// Re-export the shared options so k6 applies them to this test
export { options };

// Function to test GET request
function testGetPost(id) {
  const res = getPost(id);
  check(res, {
    "GET status is 200": (r) => r.status === 200,
    "GET response has correct id": (r) => JSON.parse(r.body).id === id,
  });
}

// Main function
export default function () {
  testGetPost(1);
  sleep(1);
}

The same approach applies to the GraphQL test: splitting it into smaller, atomic functions makes them easy to reuse when building more complex scenarios later, and makes it simpler to understand what the test script does.
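Validation logic can be shared the same way. The project structure later in this guide lists a checks.js module for reusable validations; a minimal sketch of what it could contain, using the hypothetical helpers statusIs, postHasId, and allChecks (add export to each when placing them in a k6 module):

```javascript
// Hypothetical contents for ./src/utils/checks.js
// Each helper returns an object of named check functions, the shape k6's check() accepts.
function statusIs(expected) {
  return { [`status is ${expected}`]: (r) => r.status === expected };
}

function postHasId(expectedId) {
  return {
    // A fixed name keeps results for every ID aggregated under one check in the summary
    "post has expected id": (r) => {
      try {
        return JSON.parse(r.body).id === expectedId;
      } catch (e) {
        return false; // a non-JSON body fails the check instead of throwing
      }
    },
  };
}

// Merges several check objects into the single object passed to check()
function allChecks(...checkObjects) {
  return Object.assign({}, ...checkObjects);
}
```

A test could then call check(res, allChecks(statusIs(200), postHasId(id))). Keeping check names stable, rather than embedding the ID, avoids one summary line per data value.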

We can go further by parameterizing the data used in requests and assertions instead of hardcoding it.

Dynamic Data and Parameterization

Use dynamic data to simulate more realistic scenarios and to run the same test against different data sets. K6 provides SharedArray (from the k6/data module) for loading data from a file: the data is loaded once in the init context and shared read-only by all VUs, instead of each VU keeping its own copy in memory.

For example, store the user IDs in a JSON file, users.json:

[
  { "id": 1 },
  { "id": 2 },
  { "id": 3 },
  { "id": 4 },
  { "id": 5 },
  { "id": 6 },
  { "id": 7 },
  { "id": 8 },
  { "id": 9 },
  { "id": 10 }
]

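Then create users-config.js to load that file into a SharedArray:

// ./src/config/users-config.js
import { SharedArray } from 'k6/data';

// The callback runs only once; its result is shared by all VUs.
// open() is only available in the init context (outside the default function).
export const users = new SharedArray('User data', function () {
  return JSON.parse(open('../data/users.json')); // Load the users from the JSON file
});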

Finally, use the data in the test script, jsonplaceholder-api-rest.js:

// ./src/rest/jsonplaceholder-api-rest.js
import { check, sleep } from "k6";
import { getPost } from "../utils/requests-jsonplaceholder.js";
import { options } from "../config/options.js";
import { users } from "../config/users-config.js";

// Re-export the shared options; without this, k6 ignores the imported options
export { options };

// Function to test GET request
function testGetPost(id) {
  const res = getPost(id);
  check(res, {
    "GET status is 200": (r) => r.status === 200,
    "GET response has correct id": (r) => JSON.parse(r.body).id === id,
  });
}

// Main function: each iteration uses a randomly chosen ID from the data file
export default function () {
  const user = users[Math.floor(Math.random() * users.length)];
  testGetPost(user.id);
  sleep(1);
}
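Picking a random entry can repeat some IDs and skip others during a short run. If you want every entry used evenly, a minimal sketch of a hypothetical helper, pickByIteration, that cycles through the data by iteration number:

```javascript
// Hypothetical helper: picks an item deterministically from an iteration counter,
// cycling through the list so every entry is used before any repeats.
function pickByIteration(items, iteration) {
  if (items.length === 0) {
    throw new Error("cannot pick from an empty list");
  }
  if (!Number.isInteger(iteration) || iteration < 0) {
    throw new Error(`iteration must be a non-negative integer, got ${iteration}`);
  }
  // Wrap around with the modulo operator once every item has been used
  return items[iteration % items.length];
}
```

In the test script, the counter could come from the k6/execution module: import exec from "k6/execution"; and then const user = pickByIteration(users, exec.scenario.iterationInTest);. Because iterationInTest counts iterations across all VUs in the scenario, the list is walked through in order test-wide; the per-VU __ITER counter would instead restart the list for each VU.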

Project Structure

A well-organized project structure helps in maintaining and scaling your tests. Here's a suggested folder structure:

/project-root
│
├── /src
│   ├── /graphql
│   │   ├── pokemon-graphql-test.js      # Test for GraphQL Pokémon API
│   │   └── other-graphql-test.js        # Other GraphQL tests
│   │
│   ├── /rest
│   │   ├── jsonplaceholder-api-rest.js  # REST API test for JSONPlaceholder
│   │   └── other-rest-test.js           # Another REST API test
│   │
│   ├── /utils
│   │   ├── requests-graphql-pokemon.js  # Reusable functions for GraphQL requests
│   │   ├── requests-jsonplaceholder.js  # Reusable functions for REST requests
│   │   ├── checks.js                    # Reusable validation functions
│   │   └── constants.js                 # Global constants, like URLs or headers
│   │
│   ├── /config
│   │   ├── options.js                   # Global configuration options
│   │   └── users-config.js              # Configuration for users data
│   │
│   ├── /data
│   │   └── users.json                   # Users data
│   │
│   └── performance-scenarios.js         # Script combining multiple performance tests
│
├── /reports
│   └── results.html                     # Output file for results (generated after running tests)
│
├── README.md                            # Project documentation
└── .gitignore                           # Files and folders ignored by Git

This structure keeps the project organized, scalable, and easy to maintain, and avoids cluttering the project root.

Another option is to group test scripts into folders by functionality; compare both approaches and choose the one that fits your context. For example, if your project is a wallet that handles transactions, you could have a folder for each transaction type (deposit, withdrawal, transfer, etc.), each containing the test scripts for that transaction:

/project-root
│
├── /src
│   ├── /deposit
│   │   ├── deposit-test-1.js            # Test for deposit
│   │   └── deposit-test-2.js            # Another deposit test
│   │
│   ├── /withdrawal
│   │   ├── withdrawal-test-1.js         # Test for withdrawal
│   │   └── withdrawal-test-2.js         # Another withdrawal test
│   │
│   ├── /transfer
│   │   ├── transfer-test-1.js           # Test for transfer
│   │   └── transfer-test-2.js           # Another transfer test
│   │
│   ├── /utils
│   │   ├── requests-deposit.js          # Reusable functions for deposit
│   │   ├── requests-withdrawal.js       # Reusable functions for withdrawal
│   │   ├── requests-transfer.js         # Reusable functions for transfer
│   │   ├── checks.js                    # Reusable validation functions
│   │   └── constants.js                 # Global constants, like URLs or headers
│   │
│   ├── /config
│   │   ├── options.js                   # Global configuration options
│   │   ├── users-config.js              # Configuration for users data
│   │   └── accounts-config.js           # Configuration for accounts data
│   │
│   ├── /data
│   │   ├── users.json                   # Users data
│   │   └── accounts.json                # Accounts data
│   │
│   └── performance-scenarios.js         # Script combining multiple performance tests
│
├── /reports
│   └── results.html                     # Output file for results (generated after running tests)
│
├── README.md                            # Project documentation
└── .gitignore                           # Files and folders ignored by Git

This second layout has more data and configuration files, but it follows the same pattern as the first one: reusable request functions in /utils, shared settings in /config, and test data in /data.

Summary

Performance testing with K6 is critical for identifying bottlenecks and ensuring application scalability. By following best practices such as modularizing code, centralizing configurations, and using dynamic data, engineers can create maintainable and scalable performance testing scripts.

Key takeaways:

- Grafana K6 is a modern load testing tool that integrates well with CI/CD pipelines.

- Modular code organization makes tests more maintainable and reusable.

- Centralized configuration simplifies managing test parameters and thresholds.

- Dynamic data enables realistic test scenarios with parameterized inputs.

- Well-structured projects scale better and are easier to maintain.

- The web dashboard provides real-time visualization of test results.

By following these practices, performance engineers can build robust, scalable test suites that provide valuable insights into system performance.